Ansys is committed to setting today's students up for success, by providing free simulation engineering software to students.

ANSYS BLOG

March 13, 2020

Thermal Management Systems: How Hot Is Too Hot?

Heat management remains a critical requirement for electronics, especially in:

- Power generation

- Electrification

- Wireless 5G technologies

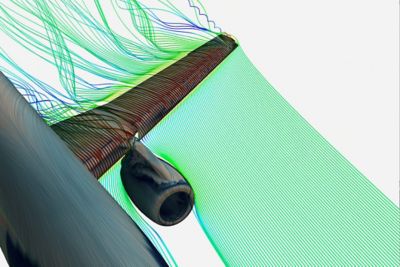

Thermal management solutions are successful when they pull heat away from sensitive or heat-producing components. The best of these systems leverage the most effective heat transfer method for the application, whether conduction, convection, radiation or a combination of the three.

A heatsink on a central processing unit (CPU) represents one of the most common thermal management solutions. It uses a large surface area and highly conductive materials to passively reduce the temperature of a CPU. Active systems, like fans and liquid cooling, can also be employed.

To select the optimum thermal solution for electronic hardware, engineers start with either a finite element analysis (FEA) tool, like Ansys Mechanical, or a computational fluid dynamics (CFD) tool, like Ansys Icepak. They use these simulation technologies to predict junction and case temperatures across the printed circuit board assembly (PCBA).

But how hot is too hot for these electronic components?

Traditionally, engineers determine the maximum component temperature based on datasheets and derating guidelines. However, engineering leaders are rejecting this outdated approach. Problems with derating tables include:

- The lack of cost-benefit assessments

  - Such an assessment would determine whether a component should be cooled to 78°C (172°F) instead of 86°C (187°F)

- A conservative nature that risks overdesign

- An inability to consider failure modes and mechanisms

  - For instance, all CPUs, regardless of process node or package, are treated the same

- An unbalanced problem-solving effort

  - Users might spend weeks creating thermal models and minutes assessing their consequences
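To make the 78°C versus 86°C question concrete, here is a minimal sketch that compares relative failure rates at the two temperatures using the Arrhenius model, a commonly used temperature-acceleration relationship in reliability engineering. This is not a description of how Sherlock computes reliability. The function name and the 0.7 eV activation energy are illustrative assumptions, and real activation energies depend on the specific failure mechanism.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(t_cool_c, t_hot_c, activation_energy_ev):
    """Ratio of failure rates at t_hot_c versus t_cool_c (Arrhenius model)."""
    t_cool_k = t_cool_c + 273.15  # convert Celsius to kelvin
    t_hot_k = t_hot_c + 273.15
    exponent = (activation_energy_ev / BOLTZMANN_EV_PER_K) * (
        1.0 / t_cool_k - 1.0 / t_hot_k
    )
    return math.exp(exponent)


# Hypothetical activation energy, chosen only for illustration.
ASSUMED_EA_EV = 0.7

factor = arrhenius_acceleration(78.0, 86.0, ASSUMED_EA_EV)
print(f"Failure rate at 86 C is about {factor:.2f}x the rate at 78 C")
```

A ratio like this is the kind of quantity a derating table cannot supply: it turns an 8°C difference into a change in expected failures, which can then be weighed against the cost of the extra cooling.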

A new workflow between Ansys Sherlock and Icepak aims to address these problems.

The Ansys Sherlock and Ansys Icepak Thermal Management Workflow

Designed to be a pre- and post-processor, Ansys Sherlock generates thermal and mechanical simulations of electronic hardware.

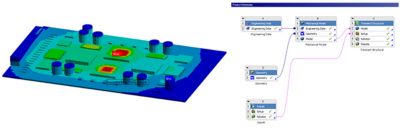

To fully capture the effects of part temperature rises, the Ansys Icepak CFD results (left) are passed as input to the structural FEA through the project schematic (right).

The pre-processing workflow between Sherlock and Icepak enables the creation of rapid and accurate thermal simulations.

Sherlock reads standard electronic computer-aided design (ECAD) files and then creates part-level geometry — with material properties — to represent the full-featured printed circuit board (PCB). The geometry and material selections are made by utilizing embedded part, package and material libraries.

Because a wide variety of 3D models can be exported from Sherlock into Icepak, users do not need to set up the PCBA or PCB within Icepak. They only need to define heat sources, thermal solutions (including heat sinks and fans) and enclosures. Icepak then solves for the resulting temperature rises.

PCBs can be exported as:

- Plates with lumped thermal conductivities — based on copper thickness and percentages

- Individual layers with lumped thermal conductivities

- Individual traces, planes and vias
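As a rough illustration of what "lumped thermal conductivity based on copper thickness and percentages" can mean, the sketch below applies a textbook rule-of-mixtures estimate to a layer stack. It is not Sherlock's actual algorithm. The `Layer` class, the function names, the material values and the example stackup are all assumptions made for this example.

```python
from dataclasses import dataclass

K_COPPER = 390.0  # W/(m*K), assumed typical value for copper
K_FR4 = 0.3       # W/(m*K), assumed typical value for FR-4 laminate


@dataclass
class Layer:
    thickness_mm: float
    copper_fraction: float  # 0.0 = pure dielectric, 1.0 = solid copper


def layer_conductivity(layer):
    """Area-weighted mix of copper and laminate within one layer."""
    f = layer.copper_fraction
    return f * K_COPPER + (1.0 - f) * K_FR4


def lumped_conductivities(layers):
    """Return (in_plane, through_plane) effective conductivity of the stack."""
    total = sum(l.thickness_mm for l in layers)
    # In-plane: layers conduct side by side, so use a thickness-weighted mean.
    in_plane = sum(layer_conductivity(l) * l.thickness_mm for l in layers) / total
    # Through-plane: layers conduct in series, so thermal resistances add.
    through_plane = total / sum(
        l.thickness_mm / layer_conductivity(l) for l in layers
    )
    return in_plane, through_plane


# Hypothetical four-layer board: signal, plane, plane, signal.
stackup = [
    Layer(0.035, 0.6), Layer(0.48, 0.0),
    Layer(0.035, 0.9), Layer(0.48, 0.0),
    Layer(0.035, 0.9), Layer(0.48, 0.0),
    Layer(0.035, 0.6),
]
k_in_plane, k_through_plane = lumped_conductivities(stackup)
print(f"in-plane: {k_in_plane:.1f} W/(m*K), through-plane: {k_through_plane:.2f} W/(m*K)")
```

The estimate shows why a single plate needs separate in-plane and through-plane values: copper layers dominate lateral spreading, while the dielectric layers dominate resistance through the thickness.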

Various electronic parts, with lumped thermal conductivities, can also be exported. Even parts without supplier-provided thermal models, such as aluminum electrolytic capacitors, can be exported.

Sherlock can then import thermal results from Icepak and use its reliability physics engines to generate failure-mechanism-specific reliability predictions for a broad range of component technologies. These predictions enable organizations to modernize their derating process and optimize thermal solutions.

For example, should you select a $10 copper heatsink over a $5 aluminum heatsink? Buying 100,000 heatsinks over a year would make this a $500,000 decision. Derating tables will not provide insight into the benefits of each heatsink. However, Sherlock helps users quantify the reduction in warranty costs based on this selection.
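The arithmetic behind this decision can be sketched as follows. The heatsink prices and annual volume come from the example above. The failure rates and cost per warranty claim are hypothetical placeholders standing in for the kind of numbers a reliability prediction would supply, and the function names are made up for this sketch.

```python
def extra_annual_cost(premium_unit_cost, baseline_unit_cost, annual_volume):
    """Added yearly spend from choosing the more expensive part."""
    return (premium_unit_cost - baseline_unit_cost) * annual_volume


def net_annual_benefit(extra_cost, annual_volume, baseline_failure_rate,
                       premium_failure_rate, cost_per_claim):
    """Warranty savings minus the added hardware cost (positive favors premium)."""
    avoided_claims = annual_volume * (baseline_failure_rate - premium_failure_rate)
    return avoided_claims * cost_per_claim - extra_cost


# Figures from the text: $10 copper vs. $5 aluminum, 100,000 units per year.
extra = extra_annual_cost(10, 5, 100_000)

# Hypothetical inputs: predicted annual failure rates and cost per claim.
net = net_annual_benefit(
    extra_cost=extra,
    annual_volume=100_000,
    baseline_failure_rate=0.020,  # assumed, with the aluminum heatsink
    premium_failure_rate=0.012,   # assumed, with the copper heatsink
    cost_per_claim=400,           # assumed, in dollars
)
print(f"Extra hardware cost: ${extra:,}")
print(f"Net annual benefit of copper: ${net:,.0f}")
```

With these placeholder inputs the copper heatsink does not pay for itself, but a larger predicted gap in failure rates or a higher cost per claim would reverse the result, which is why the decision depends on a quantified reliability estimate.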

Sherlock can also be integrated into a multiphysics simulation, between Icepak and Mechanical, to capture system-level interactions between enclosures and PCBAs. These interactions can cause sudden failures, especially in high-power applications such as inverters for electrified drivetrains.

The integration of Sherlock and Icepak demonstrates just one aspect of the Ansys electronic reliability workflow. For more detail, watch the webinar: Ansys Icepak and Sherlock For Temperature Cycling.
